18 Apr 2021 : This site won't be contributing to Google FLoC's profiling

What the FLoC?

There's been a lot of noise in the technology press recently about Google's FLoC proposal. On one side we heard from Google that they would be turning off third-party cookie support in Chrome. This would improve privacy by preventing advertisers from tracking individual users as they traverse different sites across the Web.

Google, which gains the majority of its income from advertising (81% of revenue in 2020), is introducing what it refers to as the Privacy Sandbox to Google Chrome to counteract the impact of no longer being able to track users, and to allow behavioural advertising to continue without infringing its users' right to privacy.

The Privacy Sandbox is more of an initiative than a technology. The technology that will replace tracking using third-party cookies and user fingerprinting has yet to be fully decided, but one of the options with the most momentum, given that it's been developed by Google itself, is called FLoC (Federated Learning of Cohorts). A paper released by Google provides a good summary of the way it's supposed to work.

In short, FLoC shifts the process of assigning behavioural labels from the advertising companies' servers to the client browser. This requires some clever algorithms, because an individual browser doesn't have access to data from other users. It essentially reverses the process: rather than collecting data about all users into one place (the advertisers' servers) so that it can be categorised into groups that are labelled based on behaviour, it instead sends the labels to the browser. The browser then does the work of determining which labels apply based on the sites the user visits, and picks the most appropriate group. The user's group is then sent to the advertisers so that they can target their ads more effectively, while the user's complete browsing history never has to leave their browser.

One notable facet of this approach is that FLoC is able to derive its labelling from all the sites a user visits, not just those serving third-party cookies as is the case now.

You can see how this can be presented as a privacy-win for users, if their browsing history no longer needs to be collected by random companies.
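To make the "browser picks the group" idea a little more concrete, here is a minimal sketch in Python. It is not Google's implementation. It assumes a SimHash-style locality-sensitive hash over the set of visited domains, which is one of the clustering approaches discussed for FLoC, and the function name, the 8-bit cohort width and the example domains are all made up for illustration.

```python
import hashlib


def simhash(domains, bits=8):
    """Return a `bits`-wide SimHash of a collection of visited domains.

    Illustrative only: each domain casts a +1/-1 vote on every output bit,
    so browsing histories that share many domains tend to get similar IDs.
    """
    counts = [0] * bits
    for domain in set(domains):  # each distinct domain is counted once
        digest = hashlib.sha256(domain.encode("utf-8")).digest()
        value = int.from_bytes(digest[:8], "big")
        for i in range(bits):
            counts[i] += 1 if (value >> i) & 1 else -1
    # Output bit i is set when the majority of domains voted for it.
    return sum(1 << i for i, count in enumerate(counts) if count > 0)


# Hypothetical browsing history; only the resulting number would be shared.
history = ["news.example", "cycling.example", "recipes.example"]
cohort_id = simhash(history)
```

The point to notice is that the list of domains stays local, and only the small integer `cohort_id` would be exposed to sites. A real deployment would also need some way to make sure each cohort contains enough users, which this sketch does not attempt.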

On the other side of the argument is the EFF, claiming that FLoC is a terrible idea. They cite a number of reasons for this. First, they claim that passing the group identifier of a user to a third party is actually just offering a new way for those third parties to fingerprint users, especially if the group doesn't contain many users. Second, they say that even though FLoC may prevent individual tracking, it nevertheless exposes information about a user to third parties. This isn't a big reveal, given that this is the stated purpose of FLoC, but it does bring into question the real privacy benefits that the approach is supposed to bring. Third, they highlight the fact that FLoC fails to mitigate the more tangible negative implications of targeted advertising and profiling in general, such as bias that leads to inequality and persecution. Since these are consequences of behavioural profiling, rather than the means of achieving it, solutions such as FLoC will always find it hard, if not impossible, to avoid them.
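The fingerprinting concern can be illustrated with some back-of-the-envelope arithmetic. The sketch below uses the standard information-theoretic view that learning someone belongs to a group of k people out of a population of N reveals log2(N/k) bits about them. The population and cohort sizes are hypothetical numbers chosen to make the sums easy, not figures from Google or the EFF.

```python
import math


def bits_revealed(population, cohort_size):
    """Bits of identifying information leaked by knowing a user's cohort."""
    return math.log2(population / cohort_size)


def bits_remaining(cohort_size):
    """Bits a tracker still needs to single out one user within the cohort."""
    return math.log2(cohort_size)


# Hypothetical figures: about a million users, cohorts of about two thousand.
population = 2 ** 20
large_cohort = 2 ** 11
small_cohort = 2 ** 5

revealed_large = bits_revealed(population, large_cohort)
remaining_large = bits_remaining(large_cohort)
revealed_small = bits_revealed(population, small_cohort)
remaining_small = bits_remaining(small_cohort)
```

With these made-up numbers, the smaller cohort leaves a tracker with far fewer bits to find from other sources, such as the user agent string or screen size, which is why small groups make the fingerprinting problem worse.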

I'm a privacy-rights fundamentalist, but I also believe that in many cases privacy violations are caused by overreach rather than any fundamental need. In fact, in the majority of cases where privacy violations occur, my biggest frustration is that privacy-invasive techniques are chosen over privacy-preserving ones, even though the stated aims could be achieved by either. This is the clearest indicator of bad intent that I know of, and is also a trap many organisations fall into.

A good recent example of technology being used to achieve an important end without overly impacting user privacy is the GAEN Contact Tracing protocol, which I wrote in favour of at the time. A good recent example of a privacy-invasive technology being chosen unnecessarily, which I took to be an indication of bad faith, is the UK's Contact Tracing protocol, which failed to make use of the privacy-preserving protocols that were readily available.

So, to be clear, I'd be in favour of FLoC if it were a neat technological solution that addressed privacy concerns while still allowing targeted advertising to work.

Sadly, this isn't one of those cases, and my view falls squarely in line with the EFF's. Allowing third parties access to behavioural labels based on the sites a user has visited is a horrible invasion of privacy, even if it's not sending the individual URLs that have been visited. I do not want data about my behaviour collected or shared with anyone; at least not without my explicit consent.

Google is trying to walk a fine line here, following the trend set by other browsers in blocking third-party tracking cookies, while not wanting to dent its advertising revenue or that of its partners. But to me the privacy-preserving rhetoric looks more like a gimmick than a reality: an attempt to placate those asking for better privacy, while hiding the real consequences of FLoC in amongst the technical detail.

The practicalities

If you're a Google Chrome or Chromium user, then you need to consider the implications of FLoC. If you're using some other browser (e.g. Firefox, Brave, Safari, etc.) you're almost certainly clear of it for the time being. The main non-Chrome browsers have been blocking third-party tracking cookies by default for some time already. The chance of them introducing FLoC in the near future seems small, and in fact right now there's very little incentive for them to do so. Unlike Google, they aren't reliant to the same extent on advertising for their revenue streams.

However, this might change. I was trying to think of scenarios in which other browser manufacturers were somehow incentivised to introduce the Privacy Sandbox. The obvious one is that websites start to demand it in an attempt to push up their own revenue from adverts. If users of Firefox start to see a lot of "Chrome-only" websites blocking any user that doesn't have an active Privacy Sandbox, then users will also start to demand it as a feature, at which point we could see other browser developers introducing it.

So, right now, as long as you're not really tied in to Chrome and Android, you still have some choice over this as a user. But if you were paying close attention during the preceding paragraphs, you'll have noticed that it's not just users who have to be concerned about this. Webmasters also have a role here, because unlike tracking cookies which are only served by sites which invite them in (e.g. by including advertising or Google Analytics on the page), FLoC will track all of the webpages a user visits, independent of whether the website requests it or not.

In practice I see so many sites using Google Analytics, even sites that champion online privacy, that it's reasonable to assume Google can already track you with this level of granularity, and that most webmasters are okay with this.

So, it's hard to frame this as being bad for website owners. After all, if a user wants to have the sites they visit tracked, then that's not really a decision for the site to make. Nevertheless it introduces a new dynamic that webmasters should be aware of, especially for sites that contain sensitive material (i.e. material that they think their users won't want tracked) and where their users may not be aware of the privacy implications of browsing the site with the Privacy Sandbox enabled.

My homepage certainly doesn't fall into that category of sites, but I've nevertheless worked hard to make the site respect my visitors' privacy. As someone who considers privacy to be a human right, respecting the privacy of my visitors is a minimal bar that I think every site should aspire to reach.

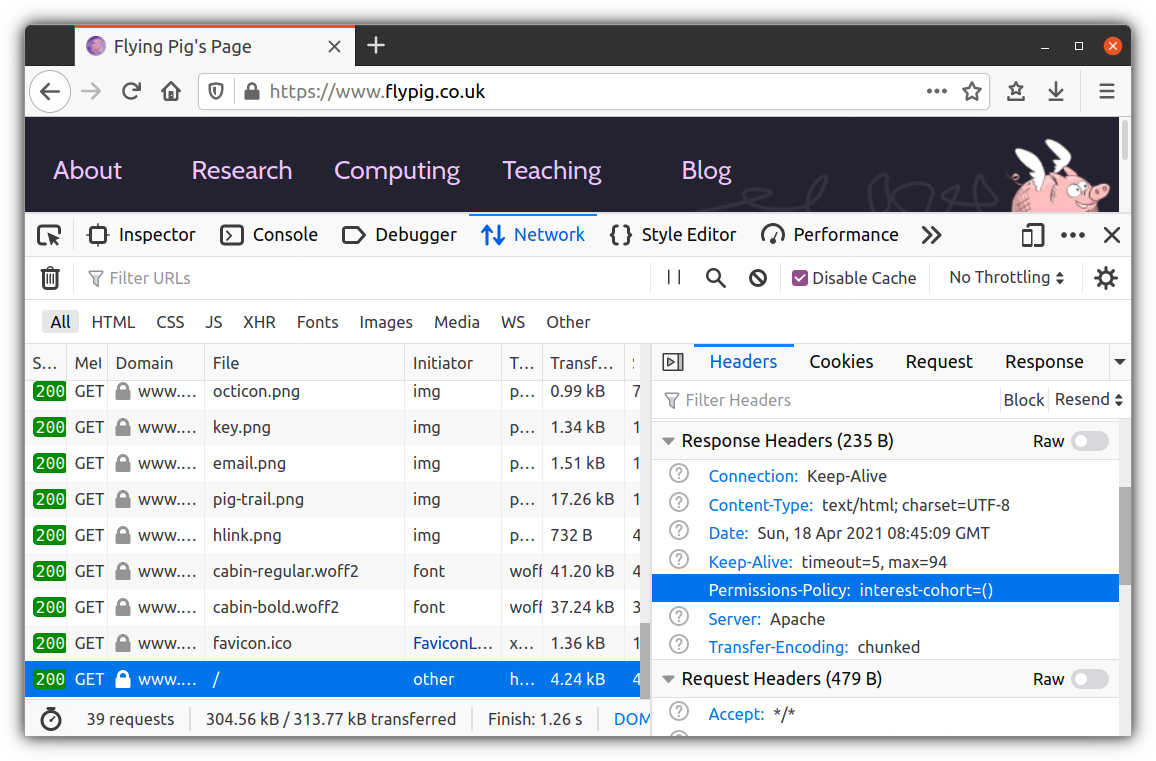

As a consequence I'm also requesting that FLoC not include visits to this site as an input to its profiling algorithm. Any webmaster can do this by adding the following line to their site's HTTP response headers.

Permissions-Policy: interest-cohort=()
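How you add the header depends on your web server. As a rough illustration, the sketch below uses nothing but Python's standard library `http.server` module to send it with every response. The handler class, the `make_server` helper and the response body are hypothetical names made up for this example, and a production site would more likely set the header in its Apache, nginx or framework configuration.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

# The opt-out header: an empty allowlist for the interest-cohort feature.
FLOC_OPT_OUT = ("Permissions-Policy", "interest-cohort=()")


class OptOutHandler(BaseHTTPRequestHandler):
    """Toy handler that attaches the FLoC opt-out header to every page."""

    def do_GET(self):
        body = b"This page asks not to be included in FLoC cohorts.\n"
        self.send_response(200)
        self.send_header(*FLOC_OPT_OUT)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        # Keep the example quiet; drop this override to see request logs.
        pass


def make_server(port=0):
    """Create a local server; port 0 asks the OS for any free port."""
    return HTTPServer(("127.0.0.1", port), OptOutHandler)
```

Calling `make_server(8000).serve_forever()` starts it locally, and something like `curl -I http://127.0.0.1:8000/` should then list the `Permissions-Policy` line among the response headers.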

The fact that this is opt-out for sites, rather than opt-in, is frustrating, but also not at all surprising. FLoC is just one of the many proposals for how to use Google's Privacy Sandbox for tracking users. This header is FLoC-specific, and so I certainly hope I don't have to introduce new headers for every random technology that every random advertising company decides to try to deploy. But if that's the direction things go, then I will.